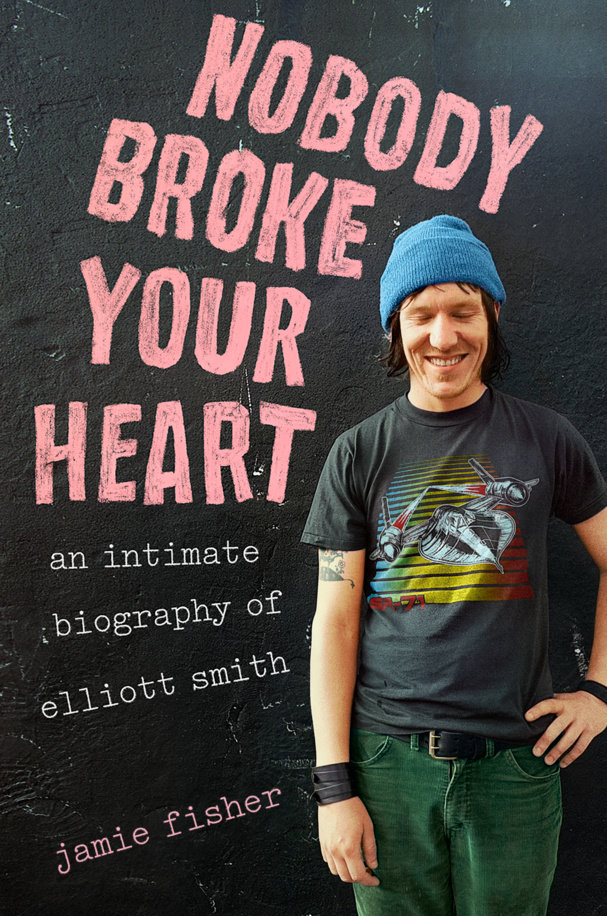

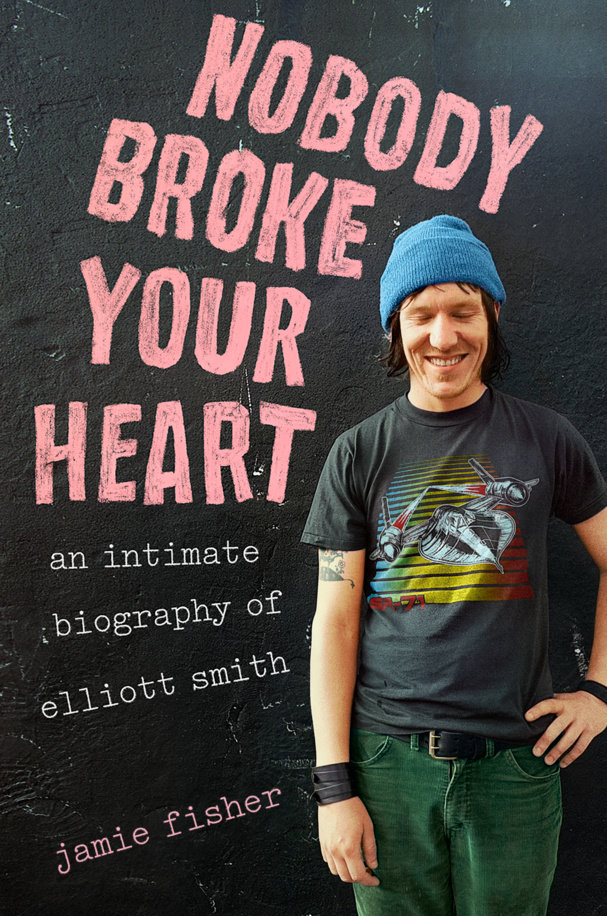

I'm reading a forthcoming biography of Elliott Smith, titled Nobody Broke Your Heart: An Intimate Biography of Elliott Smith.

So far, I've only read the introduction. It bears the hallmarks of a great fucking book.

Twenty-three years after his death, Elliott still isn’t particularly well known, or well understood, but he is terribly loved. The task of understanding and preserving his legacy has become a collective project. There are YouTube accounts like I Remember Elliott; his old fan site Sweet Adeline, defiantly mired in Web 1.0; oral-history blogs like so flawed and drunk and perfect still; Smiling at Confusion, a site for posting guitar tabs, guidance on fingerings and chords. Fans share bootleg recordings and unreleased songs, reflections on his lyrics or entreaties for help understanding them, and they speculate darkly on his death. Below videos you’ll find hundreds of comments, people gushing over Elliott’s fingerpicking, arguing about whether he’s on something at this concert or just tired but clean, thanking him for accompanying them through depression or addiction, for making them feel less alone.

What is immediate, what is human, that is love.

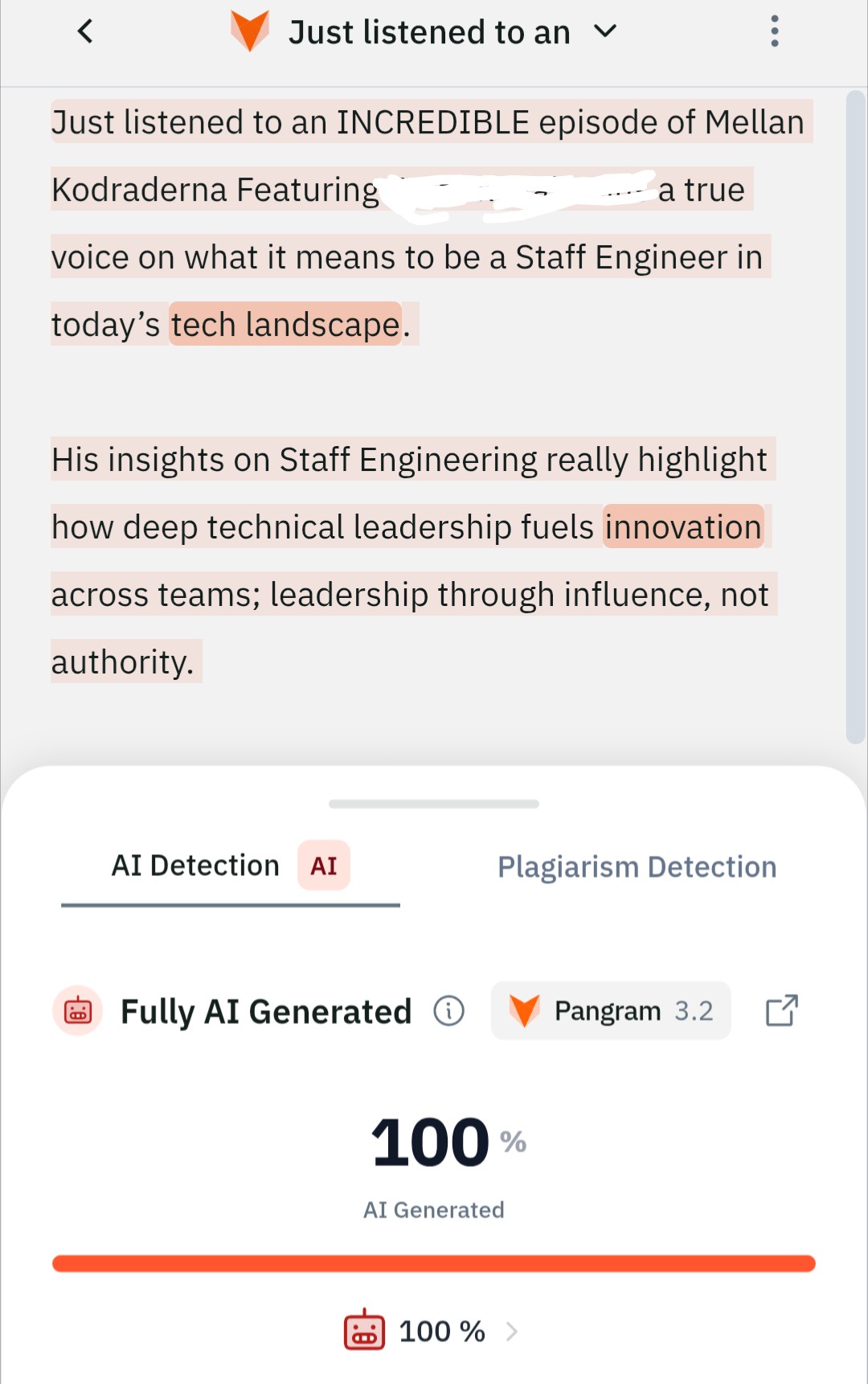

Recently, a friend asked me to run some of their work through AI to see what it would create. It instantly generated some seemingly worthwhile stuff, but in actuality AI is autocorrect on steroids. My friend isn't very knowledgeable about AI, but they produce stuff that is, frankly, some of the best I've ever read and heard in their 'fields'.

Three recent articles about AI:

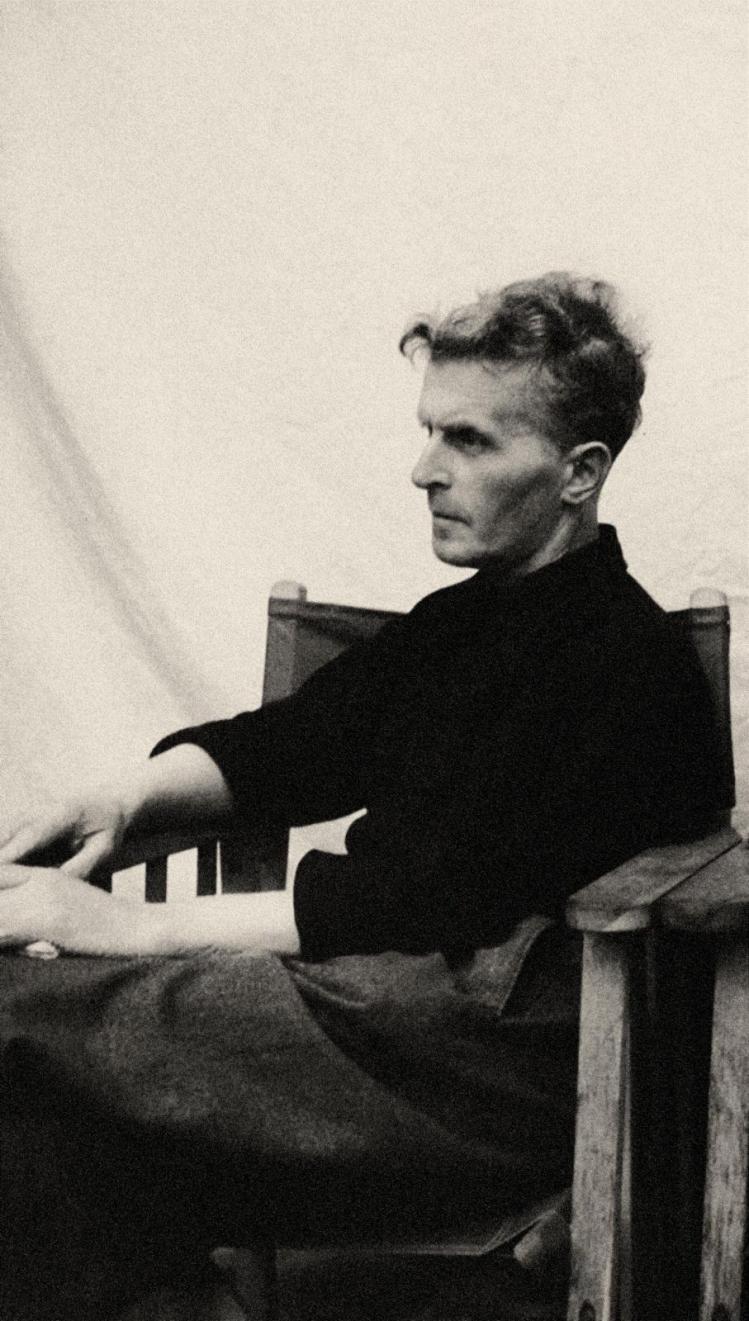

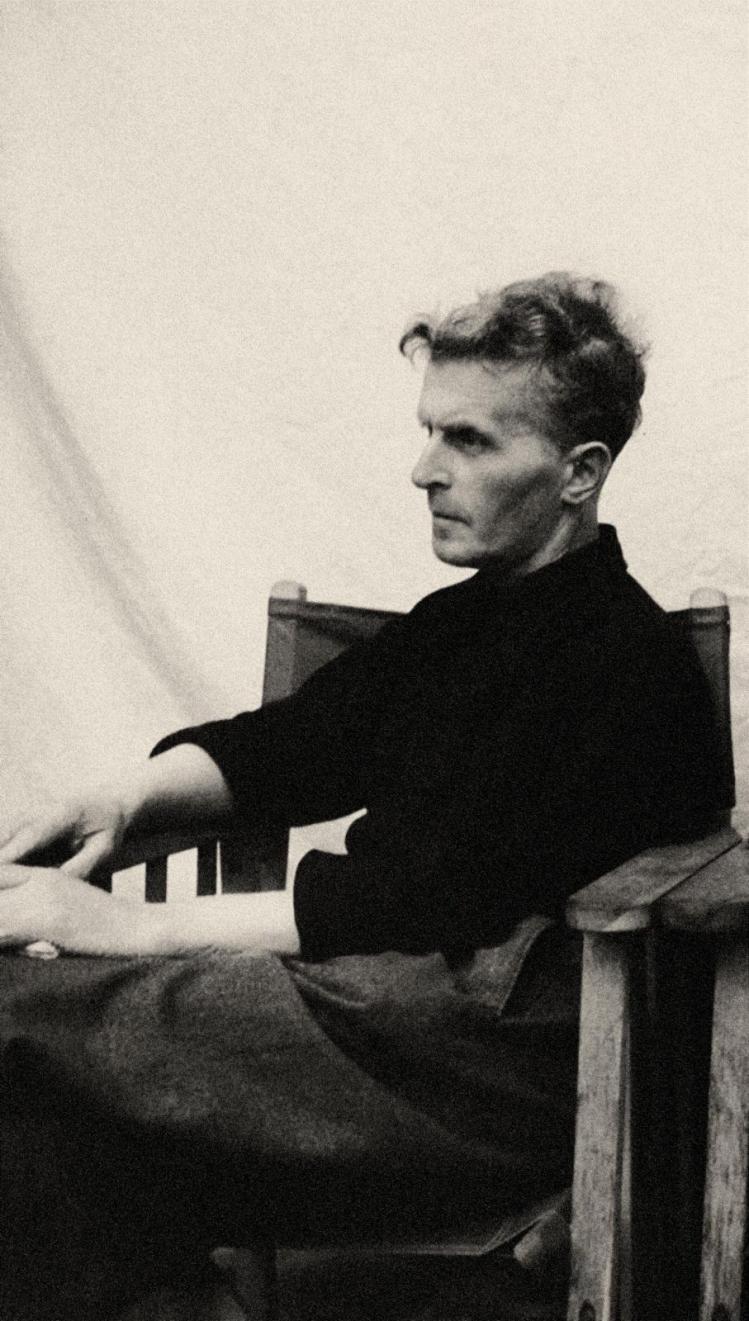

Ludwig Wittgenstein

Wittgenstein is one of my favourite modern philosophers. I highly recommend Ray Monk's beautiful Ludwig Wittgenstein: The Duty of Genius.

A recently published article on large language models (LLMs) and how they relate to Wittgenstein's views on language, semantics, and mathematics is very interesting indeed.

When Wittgenstein referred to the “beginning of the end of humanity,” he was not envisioning sci-fi cataclysms on the order of The Matrix or The Terminator or even Dr. Strangelove. He was referring to the end of humanity not primarily in terms of its biological survival, but in terms of what he called the “form of life” we inhabit. That form of life is threatened not so much by industrialization, nukes, robots, or AI agents as by a way of thinking that lowers human life to the plane of science and technology. Wittgenstein’s attempt to draw attention to that way of thinking—and dissuade us from it—is of the utmost importance in an era where the developing AI ideology threatens to further distort our understanding of how we use language and how we live.

A more in-depth excerpt from the wondrously and sharply written article:

The parts of the Investigations where Wittgenstein probes our concepts of thinking and understanding can help us escape the conceptual muddles that plague discussions and debates over AI and so-called “artificial general intelligence.”

“One of the great sources of philosophical bewilderment,” according to Wittgenstein, arises when a noun like “meaning” or “number” “makes us look for a thing that corresponds to it.” We assume that our language works principally by way of reference, so that where there is a noun there must be a thing it points to. But referring to objects is just one of language’s many functions or games. Instead of looking for the things behind our words, Wittgenstein proposes studying the grammar of the language game: the role words play—and don’t play—in these activities.

When we reflect on words like “meaning,” “thinking,” “understanding,” and “reasoning,” Wittgenstein argues, a certain picture immediately enters our heads: an internal process existing in the brain or mind that enables or somehow gives life to outwardly meaningful expressions. But, Wittgenstein asks, “What really comes before our mind when we understand a word?” Is it a kind of picture, so that I see an image of a pen in my mind’s eye when I hear the word “pen”? Do I then compare my inner picture to my experience of the outer world in order to determine whether it would be appropriate to use the word “pen”? Does some correspondence between this internal process and my expression “pen” somehow constitute the meaning?

The idea of meaning as an internal process seems unproblematic at first, even unavoidable, but, as Wittgenstein shows, it’s not clear what role such a process would actually be playing. He asks his reader, for example, to “say: ‘Yes, this pen is blunt. Oh well, it’ll do.’ First, with thought; then without thought; then just think the thought without the words.” Having conducted these absurd self-examinations, Wittgenstein asks us to reflect, “What did the thought, as it existed before its expression, consist in?”

His point is that our intuitive idea of meaning as an inner correlate of our outward expressions breaks down when it is taken as something like a scientific theory for what’s really going on when we use language. This failure shouldn’t surprise us. Our language did not evolve for scientific or metaphysical purposes, but just to help us make do and get along in the real world.

The picture of thought as an internal process accompanying our use of language is just that: a picture. It is unproblematic insofar as it arises in everyday language, as when I clarify a misunderstanding by telling you, after you’ve mistakenly handed me a red pen on the desk, “No, I meant that blue pen on the bookshelf.” But that sentence is not a claim about the state of my brain a moment ago; it could not be confirmed or disconfirmed by some kind of retroactive brain scan. It’s merely a way to advance a practical project that has gone off the rails. If it’s anywhere, meaning is in that project, not in my brain.

Of course, we might imagine that some industrious cognitive scientist equipped with the latest in brain-imaging technology might actually try to establish a causal connection between a particular brain state and the correct usage of the word “pen.” But even in that case, would it be correct to say that with a coordinated set of brain images we’ve in some sense located the meaning of the word “pen”? In what sense would the internal state that shows up on the scan explain the use or understanding of the word? Would it be analogous to the way the properties of an internal combustion engine can help explain the forward motion of a car?

This example shows how strange it is to use an examination of brain states instead of actual behavior as a criterion for ascribing understanding. If we’re looking for understanding and meaning, Wittgenstein thinks, we will find them in the various things we do with language and not in some internal process that accompanies our use of language.

This is just one of the strategies Wittgenstein uses to try to dissuade his readers from a mechanical, pseudoscientific understanding of language as it is embedded in human practices. The Investigations doesn’t attempt to refute this false understanding by formal, analytic argumentation the way a scientist or science-imitating philosopher might. Wittgenstein instead tries to show its limitations. His makeshift strategies—describing language games, imagining dialogues, conducting thought experiments, and drawing analogies—show how the scientific worldview has strayed from narrowly defined areas where it actually has purchase and started to distort our understanding of domains where it doesn’t belong.

It all reminds me of Noam Chomsky's discussion with Michel Gondry in the documentary Is the Man Who Is Tall Happy? The documentary is packed with discussion of matters like universal grammar, but I remember Chomsky talking about how a small child can see a tall man who's happy: the child immediately knows that the man is happy, and can just as immediately draw the parallel that not every tall man is happy, and that not every man is happy. The two-year-old Watumull/Roberts/Chomsky article The False Promise of ChatGPT says much about this.

It's not hard to know where happiness is found. To experience happiness is another thing, and AI won't help us there.

#ArtificialIntelligence #music #ElliottSmith #NoamChomsky #LudwigWittgenstein